How do I use Sqoop in Cloudera?

Before you can use Sqoop, you need a running Cloudera cluster. Installing one follows these steps:

- Step 1: Configure a Repository.

- Step 2: Install JDK.

- Step 3: Install Cloudera Manager Server.

- Step 4: Install Databases (install and configure MariaDB, MySQL, or PostgreSQL). …

- Step 5: Set up the Cloudera Manager Database.

- Step 6: Install CDH and Other Software.

- Step 7: Set Up a Cluster.

How do I use Sqoop?

    $ sqoop help
    usage: sqoop COMMAND [ARGS]

    Available commands:
      codegen            Generate code to interact with database records
      create-hive-table  Import a table definition into Hive
      eval               Evaluate a SQL statement and display the results
      export             Export an HDFS directory to a database table
      help               List available commands
      import             Import …

What is Cloudera Sqoop?

Sqoop is a command-line tool suite for moving data between relational databases and Hadoop. It uses MapReduce to import and export the data, which provides parallel operation as well as fault tolerance.

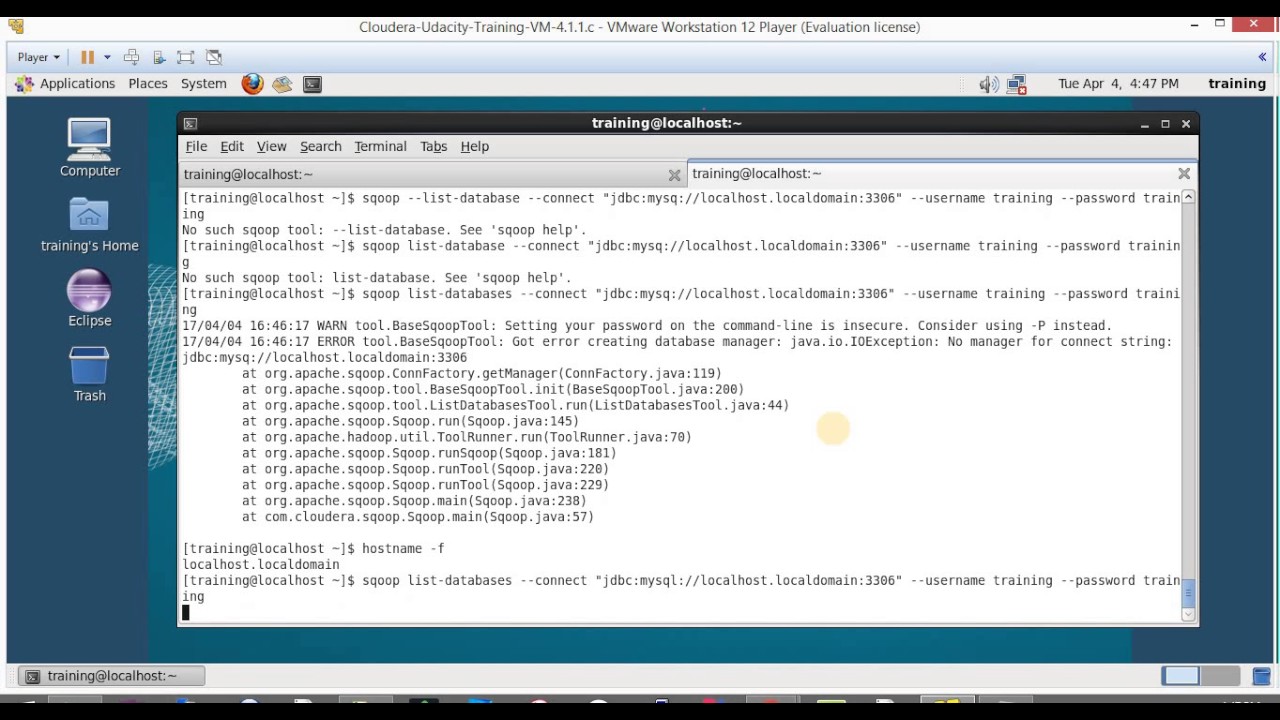

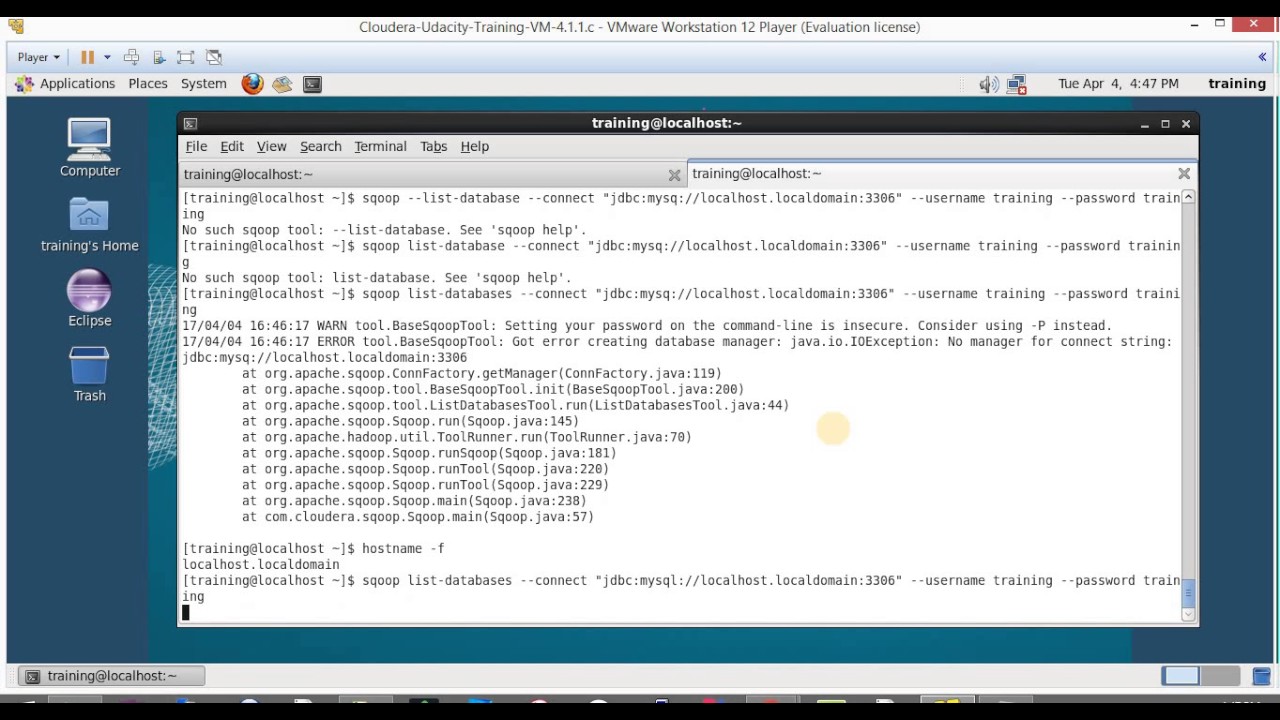

Apache Sqoop Tutorial – Hands-on on Cloudera VM

Images related to the topicApache Sqoop Tutorial – Hands-on on Cloudera VM

How do I connect to Sqoop?

- Use secure shell to log in to a remote host in your CDH cluster where a Sqoop client is installed: ssh <user_name>@<remote_host> …

- After you’ve logged in to the remote host, check to make sure you have permissions to run the Sqoop client by using the following command in your terminal window: sqoop version.

How do I start Sqoop in Hadoop?

- Step 1: Verifying Java Installation. …

- Step 2: Verifying Hadoop Installation. …

- Step 3: Downloading Sqoop. …

- Step 4: Installing Sqoop. …

- Step 5: Configuring bashrc. …

- Step 6: Configuring Sqoop. …

- Step 7: Download and Configure mysql-connector-java. …

- Step 8: Verifying Sqoop.

What is Sqoop and how does it work?

Sqoop is a tool designed to transfer data between Hadoop and relational database servers. It is used to import data from relational databases such as MySQL and Oracle into HDFS, and to export data from HDFS back to relational databases.
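As a rough illustration of the import direction, here is a minimal sketch. The JDBC URL, user name, table name, and HDFS path are made-up placeholders, and `sqoop_run` is a hypothetical helper (not part of Sqoop) that only prints the command unless you set `DRY_RUN=0` on a host with a Sqoop client.

```shell
# Hypothetical placeholders: replace with your own host, database, and user.
JDBC_URL="jdbc:mysql://db.example.com:3306/retail_db"
DB_USER="etl_user"

# Hypothetical helper: prints the command by default, runs it when DRY_RUN=0.
sqoop_run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then printf '%s\n' "sqoop $*"; else sqoop "$@"; fi
}

# Import the table "customers" from MySQL into an HDFS directory.
# -P prompts for the password instead of putting it on the command line.
sqoop_run import \
  --connect "$JDBC_URL" \
  --username "$DB_USER" -P \
  --table customers \
  --target-dir /user/etl/customers
```

Review the printed command first, then rerun with `DRY_RUN=0` once the connection details are correct.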

How does Sqoop work with Hadoop?

The Sqoop export tool writes a set of files from the Hadoop Distributed File System (HDFS) back to a relational database. The input files contain records, and each record becomes a row in the target table.

Which platform is supported by Sqoop?

| Property | Value |
|---|---|
| Developer(s) | Apache Software Foundation |
| Final release | 1.4.7 / December 6, 2017 |
| Repository | Sqoop Repository |
| Written in | Java |
| Operating system | Cross-platform |

How does Sqoop export work?

The Sqoop export tool exports a set of files from HDFS back to an RDBMS. The target table must already exist in the target database. The input files contain the records, and each record becomes a row in that table.
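An export might look roughly like the sketch below. The connection details, the table `daily_totals`, and the HDFS directory are assumptions for illustration, and `sqoop_run` is a hypothetical dry-run helper rather than anything Sqoop ships.

```shell
# Hypothetical placeholders: replace with your own values.
JDBC_URL="jdbc:mysql://db.example.com:3306/retail_db"
DB_USER="etl_user"

# Hypothetical helper: prints the command by default, runs it when DRY_RUN=0.
sqoop_run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then printf '%s\n' "sqoop $*"; else sqoop "$@"; fi
}

# Export comma-delimited files from HDFS into an existing database table.
# The table daily_totals must be created in the database beforehand.
sqoop_run export \
  --connect "$JDBC_URL" \
  --username "$DB_USER" -P \
  --table daily_totals \
  --export-dir /user/etl/daily_totals \
  --input-fields-terminated-by ','
```

The `--input-fields-terminated-by` value has to match the delimiter actually used in the HDFS files, otherwise the records will not parse into columns.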

What is the default database of Apache Sqoop?

MySQL is commonly cited as the default database for Sqoop. In practice, each Sqoop command names its database explicitly through the JDBC connection string passed to --connect.

How do I write a query in Sqoop?

Apache Sqoop can import the result set of an arbitrary SQL query. Rather than using the --table, --columns and --where arguments, you can use the --query argument to specify a SQL statement. Note: when importing with a free-form query, you have to specify the destination directory with the --target-dir argument.
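Here is a sketch of a free-form query import. The query, column names, and paths are invented for illustration, and `sqoop_run` is a hypothetical dry-run helper. Two details come from the Sqoop User Guide rather than from the text above: the query must contain the literal token `$CONDITIONS`, and a parallel import needs a `--split-by` column.

```shell
# Hypothetical placeholders: replace with your own values.
JDBC_URL="jdbc:mysql://db.example.com:3306/retail_db"
DB_USER="etl_user"

# Hypothetical helper: prints the command by default, runs it when DRY_RUN=0.
sqoop_run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then printf '%s\n' "sqoop $*"; else sqoop "$@"; fi
}

# Single quotes keep the shell from expanding $CONDITIONS; Sqoop replaces
# that token with a different range predicate for each map task.
sqoop_run import \
  --connect "$JDBC_URL" \
  --username "$DB_USER" -P \
  --query 'SELECT o.order_id, o.order_total FROM orders o WHERE o.order_total > 100 AND $CONDITIONS' \
  --split-by o.order_id \
  --target-dir /user/etl/large_orders
```

The dry-run output drops the quotes around the query, so treat it as something to read, not something to paste back into a terminal.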

Can Sqoop run without Hadoop?

You cannot run Sqoop commands without the Hadoop libraries.

How do I set the number of mappers in Sqoop?

Sqoop jobs use 4 map tasks by default. You can change this by passing either the -m or the --num-mappers argument to the job. Sqoop itself sets no maximum on the number of mappers, but each mapper opens its own database connection, so the number of concurrent connections the database can handle is the practical limit.
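To make the mapper setting concrete, here is a sketch with assumed table and column names. `sqoop_run` is a hypothetical dry-run helper. The `--split-by` argument, which is not mentioned above, tells Sqoop which column to use when dividing the rows among the map tasks.

```shell
# Hypothetical placeholders: replace with your own values.
JDBC_URL="jdbc:mysql://db.example.com:3306/retail_db"
DB_USER="etl_user"

# Hypothetical helper: prints the command by default, runs it when DRY_RUN=0.
sqoop_run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then printf '%s\n' "sqoop $*"; else sqoop "$@"; fi
}

# Use 8 parallel map tasks, splitting the rows on the order_id column.
sqoop_run import \
  --connect "$JDBC_URL" --username "$DB_USER" -P \
  --table orders \
  --num-mappers 8 \
  --split-by order_id \
  --target-dir /user/etl/orders

# Use a single map task, for example when a table has no suitable split column.
sqoop_run import \
  --connect "$JDBC_URL" --username "$DB_USER" -P \
  --table audit_log \
  -m 1 \
  --target-dir /user/etl/audit_log
```

Raising the mapper count only helps while the database can serve that many connections at once, so it is worth increasing it gradually.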

Is Sqoop an ETL tool?

Apache Sqoop and Apache Flume are two popular open-source ETL tools for Hadoop that help organizations overcome the challenges of data ingestion.

How does Sqoop work internally?

Sqoop uses import and export commands to transfer datasets between external databases and HDFS. Internally, it runs a MapReduce job to move the data, which automates the transfer and provides parallel processing as well as fault tolerance.

Where is the Sqoop home directory?

On a Hortonworks Data Platform (HDP) installation, you can find the Sqoop lib directory in /usr/hdp/2.2.0…/sqoop/lib, which is where you can place the JDBC driver.

What are Sqoop commands?

- List tables. This command lists the tables of a particular database on a MySQL server. …

- Target directory. This command imports a table into a specific directory in HDFS. …

- Password Protection. Example:

- sqoop-eval. …

- sqoop-version. …

- sqoop-job. …

- Loading CSV file to SQL. …

- Connector.
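A few of the commands above might be invoked as in the sketch below. All hosts, database names, and the password file path are placeholders, and `sqoop_run` is a hypothetical dry-run helper. The `--password-file` argument is one way to keep the password off the command line.

```shell
# Hypothetical placeholders: replace with your own values.
JDBC_URL="jdbc:mysql://db.example.com:3306/retail_db"
DB_USER="etl_user"

# Hypothetical helper: prints the command by default, runs it when DRY_RUN=0.
sqoop_run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then printf '%s\n' "sqoop $*"; else sqoop "$@"; fi
}

# Show the installed Sqoop version.
sqoop_run version

# List the tables of the retail_db database.
sqoop_run list-tables --connect "$JDBC_URL" --username "$DB_USER" -P

# Run a quick SQL statement and print the result, reading the password
# from a file (assumed path) instead of prompting for it.
sqoop_run eval \
  --connect "$JDBC_URL" --username "$DB_USER" \
  --password-file /user/etl/.db-password \
  --query "SELECT COUNT(*) FROM customers"
```

Running `eval` with a small query like this is a cheap way to confirm that the connection string and credentials work before starting a long import.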

Why is Sqoop useful?

Apache Sqoop is designed to efficiently transfer enormous volumes of data between Apache Hadoop and structured datastores such as relational databases. It helps to offload certain tasks, such as ETL processing, from an enterprise data warehouse to Hadoop, for efficient execution at a much lower cost.

What are the main features of Sqoop?

- Robust: Apache Sqoop is highly robust in nature. …

- Full Load: Using Sqoop, we can load a whole table just by a single Sqoop command. …

- Incremental Load: Sqoop supports incremental load functionality. …

- Parallel import/export: Apache Sqoop uses the YARN framework for importing and exporting the data.

What is the difference between Sqoop and Hive?

Sqoop is used to import and export data between an RDBMS and HDFS, whereas Hive is a SQL abstraction layer on top of Hadoop.

What is a full load in Sqoop?

Full Load: Apache Sqoop can load a whole table with a single command. You can also load all the tables from a database with a single command. Incremental Load: Apache Sqoop can also load only the parts of a table that have been added or updated since the previous import.
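The two load styles could look roughly like this. Table names, the check column, the last value, and the paths are all assumptions, and `sqoop_run` is a hypothetical dry-run helper.

```shell
# Hypothetical placeholders: replace with your own values.
JDBC_URL="jdbc:mysql://db.example.com:3306/retail_db"
DB_USER="etl_user"

# Hypothetical helper: prints the command by default, runs it when DRY_RUN=0.
sqoop_run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then printf '%s\n' "sqoop $*"; else sqoop "$@"; fi
}

# Full load: import every table of the database under one parent directory.
sqoop_run import-all-tables \
  --connect "$JDBC_URL" --username "$DB_USER" -P \
  --warehouse-dir /user/etl/retail_db

# Incremental load: import only rows whose order_id is greater than 1000,
# the highest value assumed to have been imported by the previous run.
sqoop_run import \
  --connect "$JDBC_URL" --username "$DB_USER" -P \
  --table orders \
  --target-dir /user/etl/orders \
  --incremental append \
  --check-column order_id \
  --last-value 1000
```

Append mode suits tables that only grow. You have to carry the last imported value from one run to the next yourself, or let a saved Sqoop job track it.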

What is the difference between Flume and Sqoop?

Sqoop is used for bulk transfer of data between Hadoop and relational databases and supports both import and export of data. Flume is used for collecting and transferring large quantities of data to a centralized data store.

What replaced Sqoop?

Apache Spark, Apache Flume, Talend, Kafka, and Apache Impala are the most popular alternatives and competitors to Sqoop.

Which are the alternatives for Sqoop?

- Azure Data Factory.

- AWS Glue.

- Qubole.

- IBM InfoSphere DataStage.

- Amazon Redshift.

- Pentaho Data Integration.

- SnapLogic Intelligent Integration Platform (IIP)

- Adverity.

What does Sqoop stand for?

Apache Sqoop (“SQL to Hadoop”) is a Java-based, console-mode application designed for transferring bulk data between Apache Hadoop and non-Hadoop datastores, such as relational databases, NoSQL databases and data warehouses.
